After watching the sci-fi movie ‘Passengers,’ I became intrigued by the business models that support the plots of movies like this. In turn, this triggered a Guest Blog post with Scientific American, which you can read here.


Case study at the CDHB referenced in The Guardian

Ten years ago a small group of us started down the path of a large-scale transformation at the Canterbury DHB. Now this work has been hailed in The Guardian as something that the NHS should follow. Read the full details here.

NBR column – the state of AI

This is my NBR column from Feb 2017:

In June last year a fascinating aerial battle took place. It didn’t take place in the actual sky but rather in the virtual one, which was appropriate considering it was a battle of man against machine.

The man in question wasn’t an ordinary pilot but a retired US Air Force pilot, Gene Lee, a graduate of the US Fighter Weapons School with combat experience in Iraq. The machine he was battling was a simulated aircraft controlled by an artificial intelligence (AI).

What was surprising about the outcome was that the AI emerged as the victor. What was more surprising was that the computer running the software wasn’t a multimillion-dollar supercomputer but one that used about $35 worth of computing power.

Welcome to the fast-moving world of AI.

It’s an area that has attracted significant media focus, and justifiably so. Experts in the field see the deployment of AI as the dawn of a new age. Andrew Ng, chief scientist at Baidu Research, is one of the gurus in the field.

“AI is the new electricity,” he says. “Just as 100 years ago electricity transformed industry after industry, AI will now do the same.”

Most of the current applications of AI focus on recognising patterns. Software is “trained” with vast amounts of information, usually with help from people who have manually tagged the data. In this way, an AI may start with images that have been labelled as cars, then, through trial and error guided by programmers, eventually recognise images of cars without any intervention.
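For readers who like to see the idea in code, here is a toy sketch of learning from labelled examples. It is only an illustration: the two numeric "features" (imagine length and height in metres) and the labels are invented, and real image recognition relies on far larger models and datasets than this nearest-average approach.

```python
import math
from collections import defaultdict


def train(examples):
    """Average the feature vectors seen for each label."""
    sums = {}
    counts = defaultdict(int)
    for features, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
        for i, value in enumerate(features):
            sums[label][i] += value
        counts[label] += 1
    return {
        label: [total / counts[label] for total in totals]
        for label, totals in sums.items()
    }


def predict(model, features):
    """Return the label whose average is closest to the new example."""
    return min(model, key=lambda label: math.dist(model[label], features))


# Hypothetical hand-tagged data: (length, height) -> label
labelled = [
    ((4.0, 1.5), "car"),
    ((4.5, 1.4), "car"),
    ((1.8, 1.1), "bicycle"),
    ((1.7, 1.0), "bicycle"),
]

model = train(labelled)
print(predict(model, (4.2, 1.6)))
```

Once the tagged examples have been averaged, the program can label a new example it has never seen, which is the "without any intervention" step described above.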

Extraordinary breakthroughs

This simple explanation of AI belies the extraordinary breakthroughs achieved with this approach, one of which is illustrated by an experiment conducted by an English company called DeepMind.

In 2015, DeepMind revealed that its AI had learned how to play 1980s-era computer games without any instruction. Once it had learned the games, it could outperform any human player by astonishing margins.

This feat is a stark contrast to the battle waged two decades ago, when an IBM computer beat Russian grandmaster Garry Kasparov at chess in 1997. To beat him, the computer relied on a virtual encyclopaedia of pre-programmed information about known moves. At no point did the machine learn how to play chess.

Winning simple computer games clearly wasn’t enough to prove the abilities of DeepMind, so a more challenging option was found in the game called Go. It’s an incredibly complex Asian board game with more possible board positions than the total number of atoms in the visible universe.

To learn Go, the AI played itself more than a million times. To put this in perspective, if a person played 10 games a day every day for 60 years, they would only manage to play around 220,000 games.

Despite the bold predictions of expert Go players, when the tournament ended in March 2016, it was the DeepMind AI that had beaten one of the world’s best players.
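As a rough check on the scale involved, the comparison can be worked through in a few lines. The 10 games a day and 60 years come from the paragraph above; ignoring leap days and treating "more than a million" as exactly one million are simplifying assumptions.

```python
GAMES_PER_DAY = 10
DAYS_PER_YEAR = 365  # leap days ignored
YEARS = 60
SELF_PLAY_GAMES = 1_000_000  # "more than a million", used here as a lower bound

# Total games a person could play at this pace
human_games = GAMES_PER_DAY * DAYS_PER_YEAR * YEARS

# How many human lifetimes of play the AI's self-play represents
ratio = SELF_PLAY_GAMES / human_games

print(f"Human lifetime total: {human_games:,} games")
print(f"Self-play is at least {ratio:.1f}x that")
```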

The ability to “learn” can be easily leveraged into the real world. While gaming applications may excite hard-core geeks, DeepMind’s power was unleashed on a more useful challenge last year – increasing energy efficiency in data centres.

By looking at the information about power consumption – such as temperature, server demand and cooling pump speeds – the AI reduced electricity requirements for a Google data centre by an astonishing 40%. This may seem esoteric but around the world data centres already use as much electricity as the entire UK.

Potential implications

Once you start to consider the power of AI, the feeling of astonishment evaporates and is replaced with an unsettling feeling about the potential implications. For example, at the end of last year a Japanese insurance company laid off a third of one of its departments when it announced plans to replace people with an IBM AI. In this example, only 34 people were made redundant but this trend is likely to accelerate.

At this stage, it’s useful to put this development in context and consider what jobs might be replaced by AI. Andrew Ng has a useful rule of thumb – “If a typical person can do a mental task with less than one second of thought, we can probably automate it using AI either now or in the near future.”

What’s important about this quote is the term “near future.” Once you extend the timeline out longer, researchers have theorised that the implications of AI for the workforce are significant. One study published in 2015 estimated that across the OECD an average of 57% of jobs were at risk from automation.

This number has been disputed heavily since it was published but it doesn’t really matter what the exact percentage will be. What is important to keep in mind is that AI will change the nature of jobs forever, and it’s highly likely that work in the future will feature people working alongside machines. This will result in a more efficient workforce, which in turn is likely to lead to job losses.

However, it’s not just the workforce that could change. The potential for this technology dwarfs anything humans have ever invented, and, just like the splitting of the atom, the jury is out on how things will develop.

One of the world’s experts on existential threats to humanity – Nick Bostrom at Oxford University – surveyed the top 100 AI researchers. He asked them about the potential threat that AI poses to humanity, and the responses were startling. More than half of them responded that they believed there is a substantial chance that the development of an artificial intelligence that matches the human mind won’t end up well for one of the groups involved. You don’t need to work alongside an AI to figure out which group.

The thesis is simple – Darwinian theory applied to the biological world leads to the dominance of one species over another. If humans create a machine intelligence, probably the first thing it would do is re-programme itself to become smarter. In the blink of an evolutionary eye, people could become subservient to machines with intelligence levels that were impossible to comprehend.

The exact timeframe for this scenario is hotly debated, but the same experts polled by Bostrom thought that there was a high chance of machines having human-level intelligence this century – perhaps as early as 2050.

To paraphrase a well-worn cliché, we will live in interesting times.

Copyright NBR. Cannot be reproduced without permission.

Read more: https://www.nbr.co.nz/opinion/keeping-eye-artificial-intelligence

Follow us: @TheNBR on Twitter | NBROnline on Facebook

NBR Column – driverless cars

This is my NBR column from December 2016:

Since the invention of the first “horseless buggy” in 1891, there haven’t been many significant changes to the basics of the car. There have been incremental improvements to the platform – such as better engines, increased safety and more comfort – but the core has remained unchanged. A driver from 1920 would be able to adapt to a modern car and the reverse would also apply.

While a driver from the 1920s would be able to drive a car, a mechanic from the same era would no longer recognise the key components. Today’s new cars are equipped with collision avoidance sensors, traction control, ABS, air bags, reversing cameras, engine computers and media players. This technology means that new vehicles contain more software than a modern passenger aircraft and a laptop is more useful than a wrench when tinkering under the hood.

While this may be startling to some people, it pales into insignificance compared to what’s about to happen to the car when driverless vehicles become mainstream.

Since their first significant debut in 2004, driverless cars have evolved quickly. They have now been demonstrated in a range of situations, with manufacturers posting videos online showing just how well their machines work (usually in near-perfect conditions).

These advances have been enabled by developments in sensors, cameras and computing power. On their own, each of these required technologies was prohibitively expensive only a decade ago. Fast forward to now, however, and the cost has fallen to the point where it’s feasible to bundle them into a car.

For example, one of the key components is a device called a LIDAR, which creates a millimetre-accurate map of the world around the car. Early versions of LIDAR systems fitted on a car cost $75,000. Just last week one manufacturer announced a version with similar capabilities that would cost about $50.

Implications for ownership

While a lot of attention is on the technology in the car, most astute analysts are focused on the second and third tier implications of driverless vehicles. This is the most interesting part of the discussion because cars are ubiquitous in most urban environments, and a change in their form and function has massive implications.

The most significant implication will concern the very notion of car ownership.

A car is one of the most expensive assets in a household but at the same time it’s also one of the least used. Most of a car’s life is spent stationary, though the cost of ownership is justified through what it creates.

In modern society a car creates access to opportunity, and for cities without an efficient mass transit system, car ownership is the way people access opportunity.

However, the notion of car ownership is being questioned in some cities and people have calculated that using a car-sharing service is cheaper than owning a car in some situations. Driverless cars are the next evolution of on-demand mobility without requiring ownership.

The most likely scenario to emerge in cities is that private car ownership will dwindle, and the demand for mobility will be met by fleets of vehicles available on demand and tailored to your requirements.

For example, a two-seater car could take you to a meeting, while a people carrier may stop past your house in the morning to collect your kids and take them to school.

Eliminating road congestion

Once you have a network of fleets running in a city, and every car is sending data about its state, it then becomes possible to optimise roads in a way that’s simply not possible now. When you know exactly how many cars are on the road at any one time and where they are going, you can start to organise their routes in such a way that eliminates congestion.

Another implication of driverless cars is the remodelling of city streets to remove carparks – cars without drivers never need to be parked for hours on the kerbside.

The biggest benefit of driverless cars is likely to be the near elimination of road accidents. A car that’s operated by a computer will never get distracted by phone calls or fall asleep at the wheel. Some researchers have predicted that driverless cars have the potential to reduce road deaths by up to 90%.

Regulating for driverless cars is one of the biggest hurdles to their adoption, and for this reason uptake on private roads (which are free of regulation) has already begun.

To illustrate, some Australian mines have operated driverless trucks since 2008, and since their introduction productivity has increased and accidents have decreased. In New Zealand one of the first significant pilots of driverless vehicles will take place in 2017 when Christchurch airport will introduce a driverless shuttle bus on its private roads.

In the next few years the workforce will start to be impacted by this technology, with truck drivers likely to be affected first. Already a delivery truck owned by an Uber subsidiary has driven almost two hundred kilometres across the US on interstate highways in self-driving mode. This has profound implications for the three million truck drivers employed in the US and the industries that support them.

The next decade will be a transition period where driverless vehicles start to become commonplace in some situations. They’re unlikely to be widespread in cities as many experts believe that there are very hard problems that still need to be solved. For this reason it won’t be until after 2025 that we’re likely to see a dramatic change in the transportation fleet.

What makes this timeframe interesting is that, unlike many technology-driven changes that have slowly changed business, this one is clear to see. Organisations that have the foresight to leverage insights about the changes created by driverless cars will do extremely well. Those that don’t will end up like the horseless buggy.

Copyright NBR. Cannot be reproduced without permission.

Read more: https://www.nbr.co.nz/opinion/fast-forward%C2%A0normalisation-driverless-cars-not-so-far


NBR – Monthly column

I’ve started writing a monthly column for a business weekly in New Zealand called The National Business Review. The first column was online recently and looked at the Singularity Summit in NZ, and set the tone for future columns.


The column can be read on the NBR site here or below:

Not many conferences in New Zealand attract more than 1400 people. Even fewer – perhaps none – of this size include a diverse range of professional directors, politicians, chief executives, teachers, university students, entrepreneurs and school pupils.

One that did was the three-day Singularity Summit in Christchurch. On stage were experts from Silicon Valley and New Zealand discussing how rapidly changing technologies would affect the world.

What was startling for many attendees is that many of these disruptive innovations aren’t vague predictions but are already in use – or about to be.

Science fiction author William Gibson once famously remarked that the future is already here – it’s just unevenly distributed. The truth of this was highlighted at the summit as speakers gave example after example of how entire industries are going to be upended as technology advances.

Given the audience size, this is clearly a hot topic and something that a lot of people are grappling with.

On the last day of the summit I talked to David Roberts, the opening and closing speaker, to get his insight on the level of interest.

“I think there really is something happening right now,” Roberts says. “My sense is that we’re at an inflection point.”

The international speakers were well placed to observe inflection points as many of them are members of the Singularity University – a think tank based in the heart of Silicon Valley. The name has its origin in a concept that speculates artificial intelligence will surpass human intelligence in the next few decades, leading to a technological singularity where computers outperform people.

While the concept of the singularity is controversial, it’s clear the world our children will inherit will have a dramatically different working environment to the one we know today.

Software running on extremely fast computers can already perform better than humans in a range of intricate tasks, including driving cars, flying planes and playing complicated games.

Technology has enabled some startling developments.

University of Auckland researcher Mark Sagar began his presentation with a relatively dry discussion about creating computing “building blocks” for designing virtual avatars.

His work aims to create super-realistic computer-generated faces that respond to external stimuli just like a real person.

For example, staying within the view of a laptop camera means that the software can “see” a human face. This then triggers the software model to release virtual oxytocin, a neurochemical that is related to trust and bonding.

The end result is that the virtual face – which is controlled by the virtual brain – starts to smile.

“It’s like a Lego system for building brains,” he casually mentioned just before he showed the audience exactly what he meant.

At this point it’s fair to say Dr Sagar is a man who knows how to capture your attention. When he demonstrated the end result on screen there was an audible gasp as the audience watched him interact with an extraordinarily life-like baby – or at least its face.

Using only his laptop, Dr Sagar’s virtual baby smiled when it was talked to and got anxious when he moved out of camera view. Although it couldn’t “see” the audience, if it could it would have seen 1400 jaws drop open.

Plenty of other jaw-dropping moments occurred during the event and at the end of the three days it was certain few organisations would be immune to an increasing pace of technology change.

While making predictions about the future is notoriously difficult, from a strategic standpoint it’s increasingly important to develop the capability to have an over-the-horizon view.

In a series of monthly columns I will take a closer look at some of the risks and opportunities presented by rapidly changing technology in areas such as driverless cars, artificial intelligence, employment, politics and the role of New Zealand organisations.

Copyright NBR. Cannot be reproduced without permission.

Read more: https://www.nbr.co.nz/opinion/fast-forward%C2%A0-roger-dennis-hold


Scanning to stop being ‘Ubered’

Over the last couple of years, and connected to the work on Sensing City, I’ve been advising Infratil on technology advances that could disrupt their business or provide opportunity. This was referenced in the annual meeting where the Chair, Mark Tume, said:

…the challenge was to stop their investments from energy to the retirement sector getting “Uber-ed” or being able to “Uber” someone else, in a reference to the Uber taxi service. Infratil would not be able to recognise the next Uber, Tume said, and it was “incredibly difficult” to pick winners in new technology.

But Infratil’s board and management had to be open to the risks and how to react. “So we don’t act like possums in the headlights,” he said.

For example, Infratil had seen the rapid rise of solar power in Australia and the potential for new battery technology which would change the power sector landscape.

“The one I’m scared of is the one that blind-sides you”

Many organisations are increasingly susceptible to being blindsided, but only a few – like Infratil – have the foresight to seek to understand the risks and opportunities.

Update – Sensing City

About half of my time is now being spent on the Sensing City project. As a result, updates to this blog will be infrequent as the project gathers pace. In the meantime you can also monitor progress on the Sensing City Facebook page. Thanks.

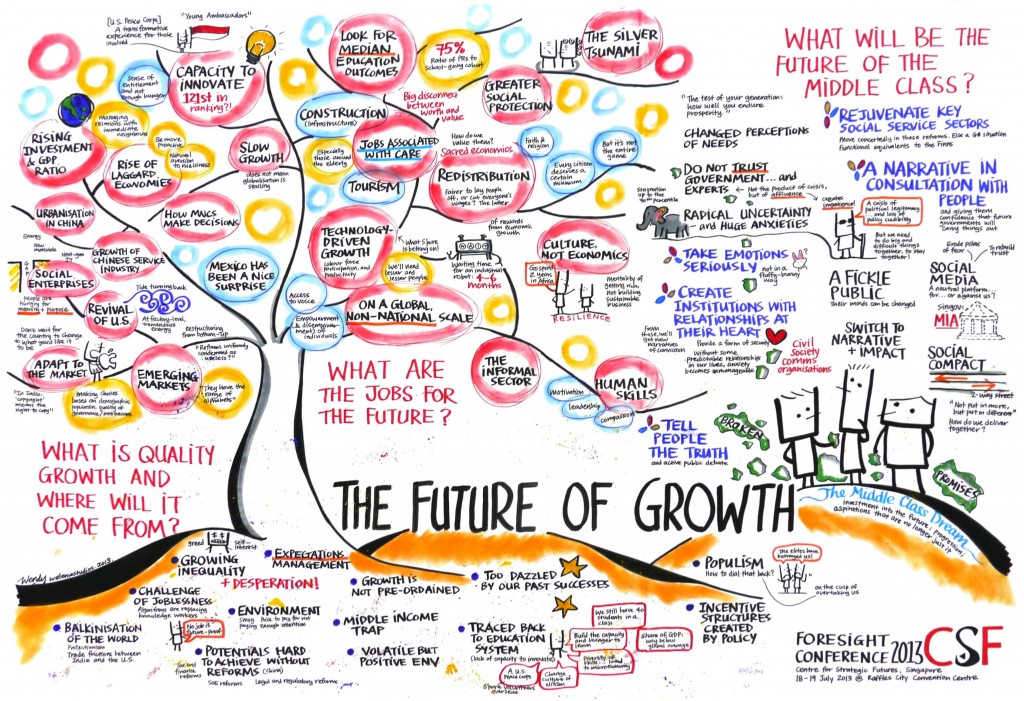

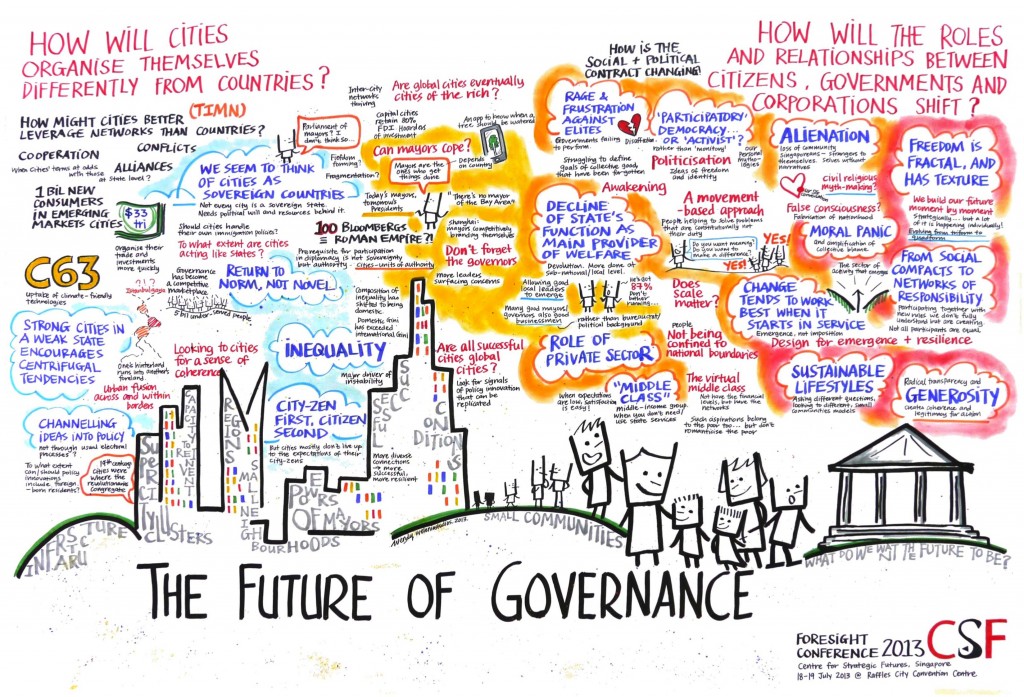

Key insights from Singapore Foresight Week 2013

In 2011 the Prime Minister’s Office in Singapore sponsored a week of foresight conversations. This year saw the next iteration and I was invited back to the conversation. Once again there were about twenty of us from around the world who were invited, and the diversity of the conversation was only trumped by the quality. My notes are in mind-map form, and therefore I’m going to post some images from the event along with some insights and a summary.

Firstly – the pictures:

Insights (in no order)

- It’s strategically important to have a good imagination and an adaptable mind.

- Most decision makers want simple answers, and ask the wrong questions. They want an answer, but in complex environments there may not be a simple answer.

- There is a book called “Future Babble” that looked at previous predictions of the future, and found that the most inaccurate predictions came from the people who were most convinced of their accuracy.

- The real lack of skills in the world is the lack of generalists.

- The waiting time to purchase a new industrial robot is 4-6 months.

- People are hard-wired to take more notice of failure than success – from an evolutionary point of view it’s more important.

- More insights are on my Twitter feed

Summary

To try to summarise the week is to fall into the trap of thinking conversation is a linear process. The discussions were so varied it’s almost impossible to bring it together, however the most important points for me related to foresight, policy and governance:

The world is becoming increasingly complex, and as a result leaders need to be adept at understanding that decision making cannot always be causal. In order to make good decisions you need firstly to understand the environment you’re working in, and Dave Snowden provides guidance here with his framework:

Dave Snowden’s Cynefin (kuh-NEV-in) Framework

If you find yourself on the left-hand side of the framework then you need to understand complexity theory, and acknowledge that there may be no right answer. That’s not easy for decision makers raised to believe that they need to make fast decisions based on minimal information.

I’ll close with a wonderful analogy that was provided in a conversation about the work of Karl Popper: you can begin to understand complexity through the lens of clouds vs clocks. You can take apart a clock to understand it, but to understand clouds you need to look at many different variables. Clouds cannot be taken apart.

Health project in the news

It’s been a long and very rewarding journey working with the Canterbury District Health Board (Christchurch, New Zealand) and the fruits of the labour are starting to be borne. One of the latest projects to come out of long-term transformation thinking was featured on a local news channel, and can be seen below: